So you've decided to get on the continuous delivery train. Congratulations! You're about to turn your deployments from anxiety-inducing to yawn-inducing, which is a luxury non-CD shops will never know. Will it be easy in the short term? Well, let's put it this way: there will need to be some changes to how you approach things. And you'll have to convince your colleagues to change the way they approach things, too. But there are steps you can take to make this transition easier for all. That's what we'll talk about today—your path to successfully doing continuous delivery.

What CD Even Is, Though

First, let me give you a quick summary of the subject at hand. Continuous delivery means packaging every significant code change and pushing it through an automated pipeline of steps until it reaches production. Commonly, these steps act as gatekeepers to the Great Beyond of your prod environment. They're your portcullises and moats. The job of these gatekeepers is to ensure your code is truly ready to enter the wild. Common steps include running automated unit tests, acceptance tests, and smoke tests. A continuous delivery pipeline also includes promoting your package to higher environments and smoke testing them. This is all done so we can eventually make deployments so uneventful that they're boring, reducing risk and saving a boatload of cash and frustration.

How Do We Get There?

If you've read the book Continuous Delivery by Jez Humble and David Farley or if you've perused a few blogs, you may feel overwhelmed at first. Continuous delivery can be a lot to take in. But fret not. We will eat this elephant one step at a time, making it a bearable and possibly fun process.

The principles we'll follow, straight out of Humble and Farley's Continuous Delivery, are to document our steps, continue with those steps even if—especially if—they become painful, and then automate them away. This requires a large dose of tenacity. You have to be willing to stick with it, possibly for an extended period of time. In my experience, this tenacity almost always pays off, often faster than you may think.

Document Your Existing Steps

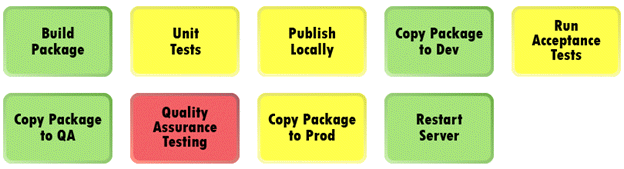

Starting your journey is as simple as documenting all the steps it takes your system to go from code that's committed and pushed to when it's in production and available to consumers. And yes, you need to document every step. You'll be surprised at how many there are. Pull in everyone involved as you need them. Any gaps in the documentation must be filled in. Talk to your developers, your QA specialists, your release manager, etc. Talk to your system administrator if you need to. Get it all in one visualization. By the end you should have something like this:

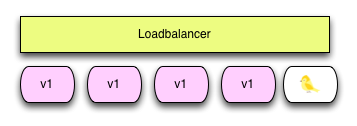

Make sure you understand who owns or commonly performs each step. Sometimes this is a system, but more often, it's a person. If you have trouble showing or understanding the steps, think of your pipeline as a conveyor belt with one thing moving through it: the software package. You may decorate this package with other things, like config files. It may also morph into a different kind of package—for instance, from an executable into a Docker container. But it's still one thing moving through each gated step.
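If a diagram feels too loose, the same conveyor belt can be written down as data. Here's a minimal Python sketch, assuming a made-up four-step pipeline; the step names, owners, and the `Step` and `describe` helpers are all hypothetical, so swap in whatever you actually documented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    owner: str       # the person or system that performs the step
    automated: bool  # True if no human has to do anything


# Hypothetical pipeline; replace with the steps you documented.
PIPELINE = [
    Step("build and unit test", "CI server", True),
    Step("deploy to QA", "release manager", False),
    Step("acceptance tests", "QA specialist", False),
    Step("deploy to production", "system administrator", False),
]


def describe(pipeline):
    """Return one line per step, in the order the package moves through them."""
    lines = []
    for position, step in enumerate(pipeline, start=1):
        kind = "automated" if step.automated else "manual"
        lines.append(f"{position}. {step.name} ({kind}, owner: {step.owner})")
    return lines
```

Printing `"\n".join(describe(PIPELINE))` gives you a quick text version of the diagram, with the owner of every step in plain sight.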

Make Friends

Ultimately, continuous delivery and DevOps are not so much about the tooling as about the people. They're about collaboration and focusing on what matters, and about delegating boring deployment work to computers. However, not everyone may take that view. Many people have built up little kingdoms around their role in getting the code to production. They may see your initiative to automate as a threat.

You'll want to understand and have compassion for all the people involved in deploying your system. This may be as easy as giving a heads up. It may be as involved as being vulnerable with them and letting them share their concerns. And at the end of it, you may still have to add a silly button to your deployment server and let them push it. Just remember: it's as much about the people as it is the tooling.

Version Your Package

Ensure your software packages are versioned. You want to know you can grab any build you need and push it through your pipeline. This will make both troubleshooting and tracking easier. Also, ensure that your package is environment-agnostic; that is, don't tie your built package to any specific environment, such as dev or prod. We'll wire in the environment-specific stuff later.
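To make that concrete, here's a small Python sketch of the idea, not a prescription. It assumes a hypothetical app called `shop`, made-up host names, and two helper functions I invented for illustration. The point is that the package name carries the version and nothing else, while environment settings live outside the package.

```python
def package_name(app, version):
    """Name of the built artifact: it contains the version, never the environment."""
    return f"{app}-{version}.zip"


# Hypothetical per-environment settings, kept outside the package.
ENVIRONMENTS = {
    "dev": {"db_host": "db.dev.example.internal"},
    "prod": {"db_host": "db.prod.example.internal"},
}


def deployment_plan(app, version, environment):
    """Pair the one versioned package with the settings of a single environment."""
    return {
        "package": package_name(app, version),
        "environment": environment,
        "settings": ENVIRONMENTS[environment],
    }
```

Calling `deployment_plan("shop", "1.4.2", "dev")` and `deployment_plan("shop", "1.4.2", "prod")` yields the same `package` value with different `settings`, which is exactly the property you're after.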

Publish Your Package

After you build, version, and unit test your package, publish it to a well-known place. This will make your package available to deploy to multiple environments without rebuilding and unit testing every time. It will also ensure you have a consistent build. You can use something as simple as a shared network drive, but many tools exist to make it easier. Maven and Gradle have the ability to publish built in. Many continuous integration servers also have some sort of publishing mechanism wired in, depending on your language.
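For the simplest case, the shared drive, publishing can be little more than a careful file copy. The Python sketch below assumes the repository is just a directory; the `publish` function is a hypothetical helper of mine, not part of any tool mentioned above. It refuses to overwrite an existing file so that a published version never changes underneath you.

```python
import shutil
from pathlib import Path


def publish(package: Path, repository: Path) -> Path:
    """Copy a built package into the repository directory exactly once."""
    repository.mkdir(parents=True, exist_ok=True)
    target = repository / package.name
    if target.exists():
        # A published version should never change after the fact.
        raise FileExistsError(f"{target} is already published")
    shutil.copy2(package, target)
    return target
```

A dedicated artifact repository gives you the same guarantee plus access control and cleanup, but this is often enough to get started.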

Find the Biggest Pain Point

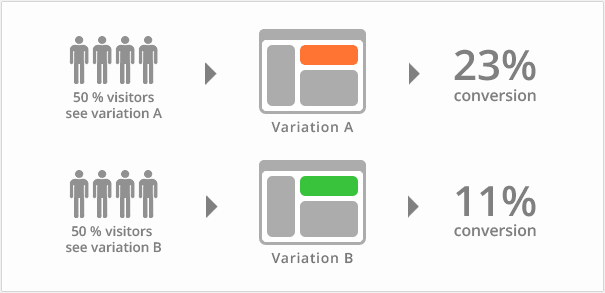

Now the fun part. Document what the biggest pain points are on your diagram, like I did here:

Green is already automated or low pain, and red is the highest pain. You want to ensure the team is doing this painful step as often as possible, so the pain stays visible and there's a strong incentive to fix it. Their instinct will be to run away from it and avoid it. Instead, we want to equip the team with what they need to get rid of it.
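If your team can't agree on which step is the reddest, putting rough numbers on it can help. Here's a tiny Python sketch, assuming hypothetical step names and a made-up pain scale from 1 (green) to 5 (red); the scores are whatever your team says they are.

```python
# Hypothetical pain scores: 1 is green (low pain), 5 is red (highest pain).
PAIN = {
    "build and unit test": 1,
    "deploy to QA": 3,
    "acceptance tests": 5,
    "deploy to production": 4,
}


def biggest_pain_point(pain):
    """Return the name of the step with the highest pain score."""
    return max(pain, key=pain.get)
```

With these numbers, `biggest_pain_point(PAIN)` picks `acceptance tests`. Lower a score after you've eased a step, run it again, and you have your next target.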

Automate the Pain Away

The next step in our path to continuous delivery is to take the pain point from the previous step and figure out how to automate it. There are many, many tools available, depending on the step that's red for you. For testing steps, you can automate your tests. Use a unit testing framework, or Postman, or a more comprehensive testing tool. This is another place where people, namely QA specialists, may think of moving to CD as a threat. It also can be a whole initiative on its own.

For deployment steps, there are many tools to automate the publishing and pushing of your system onto a server. I highly recommend investing in a deployment server. It will save you loads of time automating your pipeline. However, if you're not confident in one or have budget troubles, you can automate with as little as your command shell and some SSH. Something more in the middle can be a task runner like Gradle or even some PowerShell modules. Parameterize these scripts by version number and environment.
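Here's roughly what the "command shell and some SSH" option can look like once it's parameterized by version and environment. This is a sketch in Python under loud assumptions: the host names, the `packages/` directory, the `shop` app name, and the `install.sh` script on the server are all hypothetical. It prints the `scp` and `ssh` commands by default and only executes them when you pass `--run`.

```python
import argparse
import subprocess

# Hypothetical hosts; one entry per environment.
HOSTS = {
    "qa": "deploy@qa.example.internal",
    "prod": "deploy@prod.example.internal",
}


def build_commands(version, environment, app="shop"):
    """Return the shell commands that push one version to one environment."""
    package = f"{app}-{version}.zip"
    host = HOSTS[environment]
    return [
        ["scp", f"packages/{package}", f"{host}:/tmp/{package}"],
        # install.sh is a hypothetical script assumed to exist on the server.
        ["ssh", host, f"./install.sh /tmp/{package}"],
    ]


def main(argv=None):
    parser = argparse.ArgumentParser(description="Deploy one version to one environment.")
    parser.add_argument("version")
    parser.add_argument("environment", choices=sorted(HOSTS))
    parser.add_argument("--run", action="store_true", help="execute instead of printing")
    args = parser.parse_args(argv)
    for command in build_commands(args.version, args.environment):
        if args.run:
            subprocess.run(command, check=True)
        else:
            print(" ".join(command))
```

Calling `main(["1.4.2", "qa"])` prints the two commands without touching any server. To use it as a script, add a `main()` call at the bottom of the file. The same version number works for every environment, which is why the package had to be environment-agnostic in the first place.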

You may not be able to automate your most painful step, or you may find the learning curve steep. That's alright. If it's too difficult, find something similar to start with. You can also reduce the manual parts to a few button clicks. That helps. As long as you can remove enough of the pain your red step causes to make it no longer the most painful one, you're moving in the right direction.

Rinse, Repeat

Aggressively and continuously repeat the previous steps. Once you automate most of your painful steps away, your entire team will have an amazing change of attitude when it comes to deployments. It will feel like a large weight has been lifted off your shoulders. Ideally, with one button push, you can get your system from your package repository all the way to production. Most likely, you'll need to press one button per environment. Even then, it'll still be a breath of fresh air compared to the way it was before CD. Nonetheless, you'll want to keep automating until you achieve the one-button-push deployment.

I'm Done Now, Right?

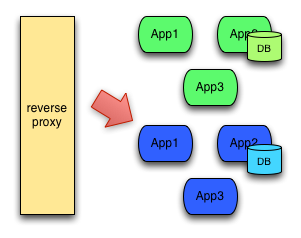

Even though your deployment life will be much easier, I wouldn't stop there. Once you have an effective deployment pipeline, you can do many beautiful things with it. You can make it a zero-downtime deploy. Your team can add feature toggles so you can separate your deployments from your releases. And of course, you should continue to refine and evolve the pipeline as you find new or smaller pain points along the way.
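Feature toggles sound fancier than they are. Here's a minimal Python sketch, assuming a hypothetical `new_checkout` feature and an in-memory toggle store; in practice the toggles would more likely live in configuration or a database.

```python
# Hypothetical toggle store; a real one would likely live in config or a database.
TOGGLES = {"new_checkout": False}


def is_enabled(name, toggles=TOGGLES):
    """Unknown toggles count as off, so unfinished code stays dark by default."""
    return toggles.get(name, False)


def checkout(cart_total, toggles=TOGGLES):
    """Both code paths are deployed; the toggle decides which one is released."""
    if is_enabled("new_checkout", toggles):
        return f"new checkout: {cart_total:.2f}"
    return f"old checkout: {cart_total:.2f}"
```

The new checkout code ships to production with every deployment, but customers only see it once someone flips the toggle. That's the separation between deploying and releasing.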

You're on Your Way

As you can see, achieving continuous delivery is well within your reach. It's simple, yet you must persist through all the blockages. Focus on people and show them how continuous delivery eases their role. Continually and aggressively knock one obstacle down at a time, and you'll be there sooner than you think!

Author: Mark Henke

Mark has spent over 10 years architecting systems that talk to other systems, doing DevOps before it was cool, and matching software to its business function. Every developer is a leader of something on their team, and he wants to help them see that.